In parts 1 and 2 of this series, we explored how Microsoft Fabric can unlock your SAP data in new and exciting ways. Now it’s time to roll up our sleeves and dive into the practical side of things: integrating your SAP data into Fabric, leveraging Fabric’s powerful data engineering tools, and turning that data into actionable insights.

In this article, we’ll focus our attention on the various options for bringing SAP data into Fabric, from native connectors to 3rd-party solutions that seamlessly integrate with your existing SAP business systems. This is a deep and complex topic, so buckle up and let's dive in.

Integrating SAP Data into Fabric

In order to unlock the full potential of your SAP data, we first need to integrate it into OneLake, the central storage layer of Microsoft Fabric. In this section, we’ll explore the various options for achieving this, starting with Microsoft’s standard connectors, which provide seamless integration with systems like ECC, S/4HANA, and BW. We’ll also examine SAP’s own integration tools and methods, along with partner solutions that offer added flexibility for more complex scenarios. Whether you’re working with legacy systems, modern cloud environments, or a hybrid setup, this section will help you define your ideal SAP integration strategy. You can also find useful information on this topic from Ulrich Christ (program manager for Fabric Data Factory) here.

Out-of-the-Box Connectors

Microsoft provides a broad range of standard connectors that you can use to load SAP data into Fabric/OneLake. These connectors support data access from SAP systems like ECC, S/4HANA, and BW, so they cover both legacy and modern SAP environments. The list of standard connectors includes the following:

The SAP Table connector fetches data from SAP business systems via remote function calls (RFCs). Under the hood, the connector uses the Self-Hosted Integration Runtime (SHIR) and the SAP .NET Connector (NCo) to perform the RFC-based lookups. By default, the connector uses the /SAPDS/RFC_READ_TABLE2 function that’s provided with SAP NetWeaver (via SAP Data Services), but you can create your own lookup functions as long as they conform to the same function interface. Although the connector does support some basic pagination and customization options, it's not ideally suited for mass data consumption.

The SAP HANA connector can be used to fetch data from SAP HANA databases, including SAP S/4HANA, Business Suite on HANA (SoH), and SAP BW/4HANA system databases as well as SAP HANA sidecar databases. The connector can copy normal database tables as well as data from HANA information models (e.g., analytic and calculation views).

Though not specific to SAP, this standard connector can be used to replicate data directly from your SAP system database, whether you're running on SAP HANA or AnyDB (e.g., SQL Server, Oracle, or DB2). Since this option requires a direct connection to your SAP system database, there are several licensing implications to consider, and it probably calls for a discussion with your SAP licensing rep.

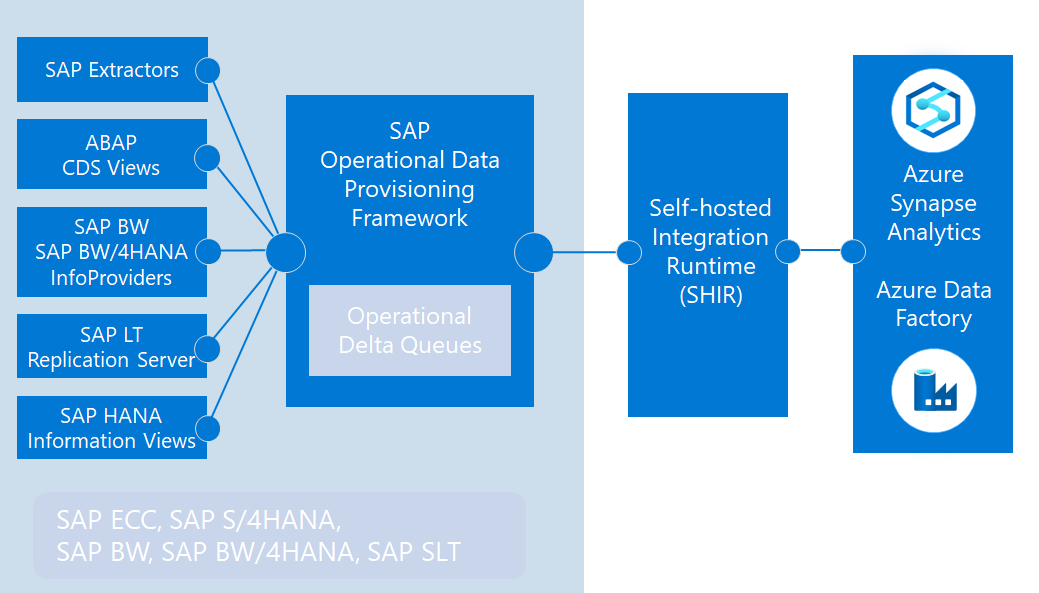

The SAP CDC connector is built on top of SAP’s Operational Data Provisioning (ODP) framework, making it highly versatile. Through ODP, it supports data integration from legacy SAP ECC, EWM, and BW systems as well as SAP BW/4HANA, SAP SLT, and SAP S/4HANA. The “CDC” (change data capture) feature enables delta-based data extraction: after an initial full load, only the records that changed since the last run are transferred, which makes it well suited to high-volume scenarios. As the most flexible of Microsoft’s standard connectors, it supports mass parallelization and heavy traffic loads, handling millions of records reliably.

Although listed as an ECC connector, this connector could be more accurately described as an OData connector. In other words, it's a connector that can be used to fetch data from SAP ECC (and technically other SAP business systems) via OData-based web service calls. If you happen to have a decent catalog of OData services (and the corresponding SAP Gateway infrastructure), this connector can be a viable option. However, performance-wise, it is not nearly as efficient as the other connectors listed above.

The SAP BW Open Hub connector extracts data from legacy SAP BW systems using the SAP BW Open Hub Service. It can be a viable option if you're looking to replicate and/or migrate data from SAP BW artifacts such as InfoCubes and DataSources. Note, however, that this connector is not compatible with SAP BW/4HANA.

The SAP BW via MDX connector copies data from SAP BW data sources, including InfoCubes, QueryCubes, and Business Explorer (BEx) queries, using MultiDimensional eXpressions (MDX) queries. Once again, if you're looking to replicate and/or migrate data from SAP BW systems, this can be a viable option.

The SAP Cloud for Customer connector can be used to replicate data from SAP Cloud for Customer (SAP C4C) systems.
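To make the pagination point about the RFC-based table connector at the top of this list more concrete, here is a minimal Python sketch of skip/count paging, the general pattern behind the ROWSKIPS and ROWCOUNT parameters of RFC_READ_TABLE-style function modules. It does not call SAP or the Fabric connector: `fake_fetch` and `FAKE_TABLE` are hypothetical stand-ins for a remote call, and the page size is arbitrary.

```python
from typing import Callable, Dict, Iterator, List

Row = Dict[str, str]


def read_in_pages(
    fetch_page: Callable[[int, int], List[Row]], page_size: int
) -> Iterator[Row]:
    """Yield rows by calling fetch_page(skip, count) until a short page arrives."""
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    skip = 0
    while True:
        page = fetch_page(skip, page_size)
        yield from page
        # A page shorter than page_size means the source is exhausted.
        if len(page) < page_size:
            break
        skip += len(page)


# Hypothetical in-memory "table" standing in for an SAP table behind an RFC.
FAKE_TABLE: List[Row] = [{"MATNR": f"M{i:04d}"} for i in range(25)]
calls: List[tuple] = []


def fake_fetch(skip: int, count: int) -> List[Row]:
    """Stand-in for one remote call; records each (skip, count) request."""
    calls.append((skip, count))
    return FAKE_TABLE[skip : skip + count]


rows = list(read_in_pages(fake_fetch, page_size=10))
print(len(rows), "rows fetched in", len(calls), "round trips")
```

Every page is a separate round trip to the source system, which is one reason this style of access becomes slow for very large tables.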

With all these connector choices, there’s no one-size-fits-all solution—or right or wrong answer—when it comes to deciding which connector(s) to use. The best approach is to select the connector(s) that align most closely with your specific landscape and integration requirements, whether you’re working with SAP ECC, S/4HANA, or some combination of legacy and modern systems.

Figure 1: Microsoft Out-of-the-Box SAP Connectors

It’s also perfectly acceptable, and often strategic, to build an integration plan that leverages multiple connectors to address different use cases or data sources. This flexibility ensures you can tailor your integration strategy to meet your organization’s unique needs.

Finally, before we move on, we should address the SAP-sized elephant in the room. In July of 2024, SAP released note #3255746 which restricted the use of RFC modules in the ODP Data Replication API by 3rd-party applications and called for vendors to re-platform their solutions to work with SAP Datasphere—more on this in a moment.

Figure 2: Integration of the SAP CDC Connector with the ODP Framework

Since the ODP framework is utilized by a host of 3rd-party data integration platforms beyond those developed by Microsoft, suffice it to say that this note was met with a LOT of frustration from customers and partners. It also brings the continued use of the SAP CDC connector into question, at least in its current state.

At the time of this writing, Microsoft is working on another type of CDC connector (in private preview) that leverages the OData interface for ODP, which is still supported. The primary challenge here is performance, since RFC-based access is MUCH faster. As this saga continues, we'll come back and update this section with further details as they become available.
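Whichever transport ends up carrying the data (RFC or OData), the delta idea behind a CDC connector stays the same: after an initial snapshot, only inserts, updates, and deletes are shipped and merged into the target. The Python sketch below illustrates that merge step in isolation. The operation codes `"I"`, `"U"`, and `"D"` and the customer records are hypothetical and are not the ODP wire format.

```python
from typing import Dict, Iterable, Optional, Tuple

Row = Dict[str, str]
Change = Tuple[str, str, Optional[Row]]  # (operation, key, row image)


def apply_delta(target: Dict[str, Row], changes: Iterable[Change]) -> Dict[str, Row]:
    """Merge a batch of change records into a keyed target table, in order."""
    for op, key, row in changes:
        if op == "D":
            # Delete: remove the key if present (idempotent).
            target.pop(key, None)
        elif op in ("I", "U"):
            if row is None:
                raise ValueError(f"{op} change for key {key} has no row image")
            # Insert and update both become an upsert on the key.
            target[key] = row
        else:
            raise ValueError(f"unknown operation code: {op}")
    return target


# Initial full load (hypothetical customer records).
snapshot: Dict[str, Row] = {
    "1000": {"KUNNR": "1000", "CITY": "Berlin"},
    "2000": {"KUNNR": "2000", "CITY": "Hamburg"},
}

# A later delta batch: one update, one delete, one insert.
delta = [
    ("U", "1000", {"KUNNR": "1000", "CITY": "Munich"}),
    ("D", "2000", None),
    ("I", "3000", {"KUNNR": "3000", "CITY": "Cologne"}),
]

result = apply_delta(snapshot, delta)
print(sorted(result))
```

Only three change records cross the wire here instead of the whole table, which is why delta extraction scales so much better than repeated full loads.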

SAP Integration Solutions

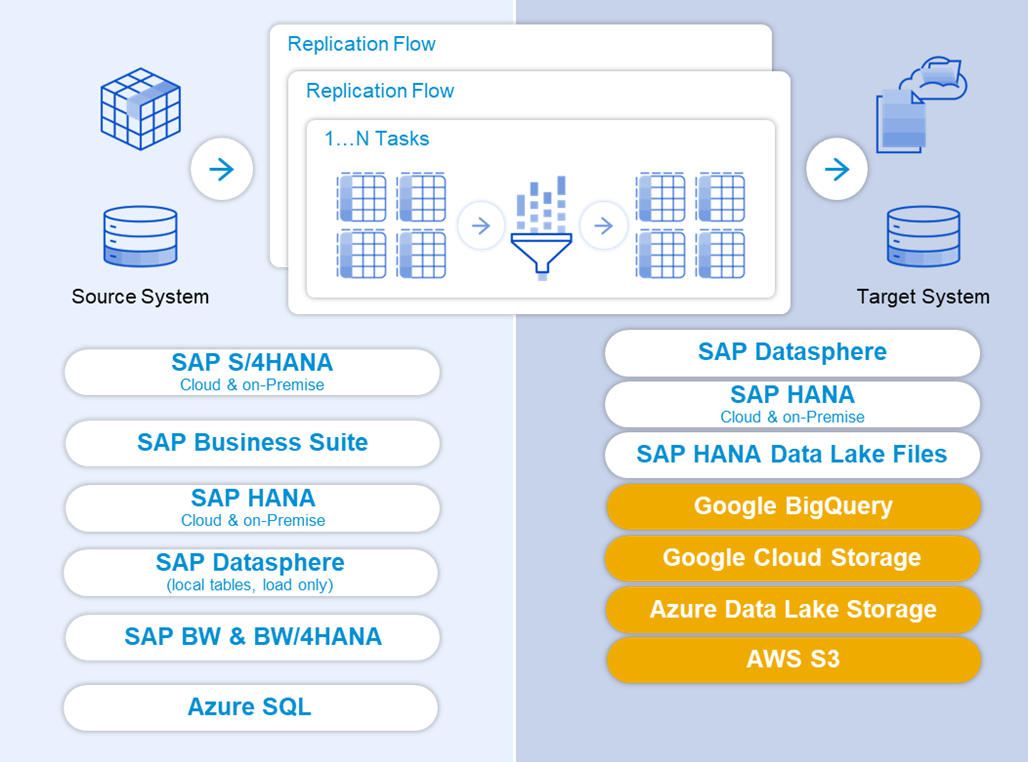

As noted in the previous section, SAP is directing customers to use SAP Datasphere to replicate data to non-SAP targets. To simplify this process and provide greater flexibility, SAP has introduced Datasphere Premium Outbound Integration, so that you pay only for the resources you use to build and run replication flows. You can find more information about Datasphere pricing here.

As you can see in Figure 3 below, these delta-enabled replication flows can be used to stage SAP data in Azure Data Lake Storage (ADLS Gen 2). Here, it's important to note that while OneLake is actually built on ADLS Gen 2, Datasphere cannot (currently) write directly to OneLake. However, the data's only one hop away and can easily be integrated into OneLake via shortcuts and/or Data Factory pipelines.
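The "one hop" is easier to picture once you see how similar the two storage addresses are. The Python sketch below builds an ADLS Gen2 URI and a OneLake URI for the same folder. It is only an illustration: the account, container, workspace, and lakehouse names are hypothetical, and the OneLake pattern shown assumes a lakehouse item addressed by name (workspaces or items with special characters may need IDs instead).

```python
def adls_uri(account: str, container: str, path: str) -> str:
    """Build an ABFS URI for a folder in an ADLS Gen2 storage account."""
    return f"abfss://{container}@{account}.dfs.core.windows.net/{path.lstrip('/')}"


def onelake_uri(workspace: str, lakehouse: str, path: str) -> str:
    """Build an ABFS URI for a folder inside a Fabric lakehouse in OneLake."""
    return (
        f"abfss://{workspace}@onelake.dfs.fabric.microsoft.com/"
        f"{lakehouse}.Lakehouse/{path.lstrip('/')}"
    )


# Hypothetical names: where a replication flow might stage data ...
staged = adls_uri("mystorageacct", "sap-staging", "datasphere/sales")
# ... and where the same data could surface in OneLake via a shortcut or pipeline.
surfaced = onelake_uri("SalesWorkspace", "SapLakehouse", "Files/datasphere/sales")

print(staged)
print(surfaced)
```

A shortcut leaves the files in the storage account and exposes them under the lakehouse path, while a Data Factory pipeline physically copies them; which one fits depends on your governance and performance needs.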

Figure 3: SAP Datasphere Premium Outbound Integration Replication Flow Concepts

If you're not ready to move to Datasphere, you can also use familiar data tools such as SAP Landscape Transformation Replication Server (SAP SLT) and SAP Data Services to build replication flows. These tools also stage SAP data into ADLS Gen 2 storage, so the integration from there into Fabric is straightforward.

Partner Solutions

As Fabric adoption has really taken off in the past 12 months, a number of partners have emerged with replication solutions that offer near real-time integration capabilities. Some notable examples include the following:

Fabric Database Mirroring

While SAP and integration partners were busy navigating the data replication and connector wars over the past few months, another exciting development has been unfolding in the Fabric space. In late 2024, Microsoft announced the general availability of database mirroring for several popular data platforms (e.g., Azure SQL, Cosmos DB, and Snowflake). Around the same time, Microsoft also formally introduced Open Mirroring in Microsoft Fabric, enabling partners to build and integrate database mirroring solutions in an open and standardized way. In the video below, Ulrich Christ provides an excellent overview and high-level demonstration of how the Open Mirroring framework works.

These are exciting times as many of the vendors noted above are developing mirroring solutions that will ultimately make SAP data integration into Fabric a breeze. In fact, you can see a couple of live demos in the video below from the SAP on Azure video podcast. We'll have much more to say on this topic in the coming weeks.

Closing Thoughts

In this article, we explored the various options for bringing SAP data into Fabric, from leveraging out-of-the-box connectors from Microsoft and partner solutions to SAP-centric alternatives based on products like SAP Datasphere and Data Services. Each approach offers unique advantages, enabling you to tailor your integration strategy to your specific needs.

Once your SAP data is staged, whether in Azure Data Lake Storage or directly in OneLake, you have the flexibility to transform and analyze it in Fabric. With that in mind, in my next post we'll explore ways of putting your SAP data to work using Fabric's data engineering tools.